The missing layer in every agentic AI stack

Salesforce told you who they are and where Claude fits in. They didn’t tell you who the signal layer is.

In February, Salesforce and Anthropic shipped the second phase of a partnership that boardrooms read as the cheat code their VP of Sales has been promising. Agentforce 360 now lives inside Claude through MCP Apps. Claude lives inside Slack. The press releases talked about trusted business context and AI actions. The keynote version of the story is that AI is about to close your deals.

The actual announcement is more honest than that. The lead customer they put on stage — RBC Wealth Management — said it directly: AI shouldn’t make financial decisions on your behalf, but it can simplify routine questions. The official examples are meeting prep, client portfolio summaries, consent tracking. The boring admin tape that eats your team’s Tuesdays.

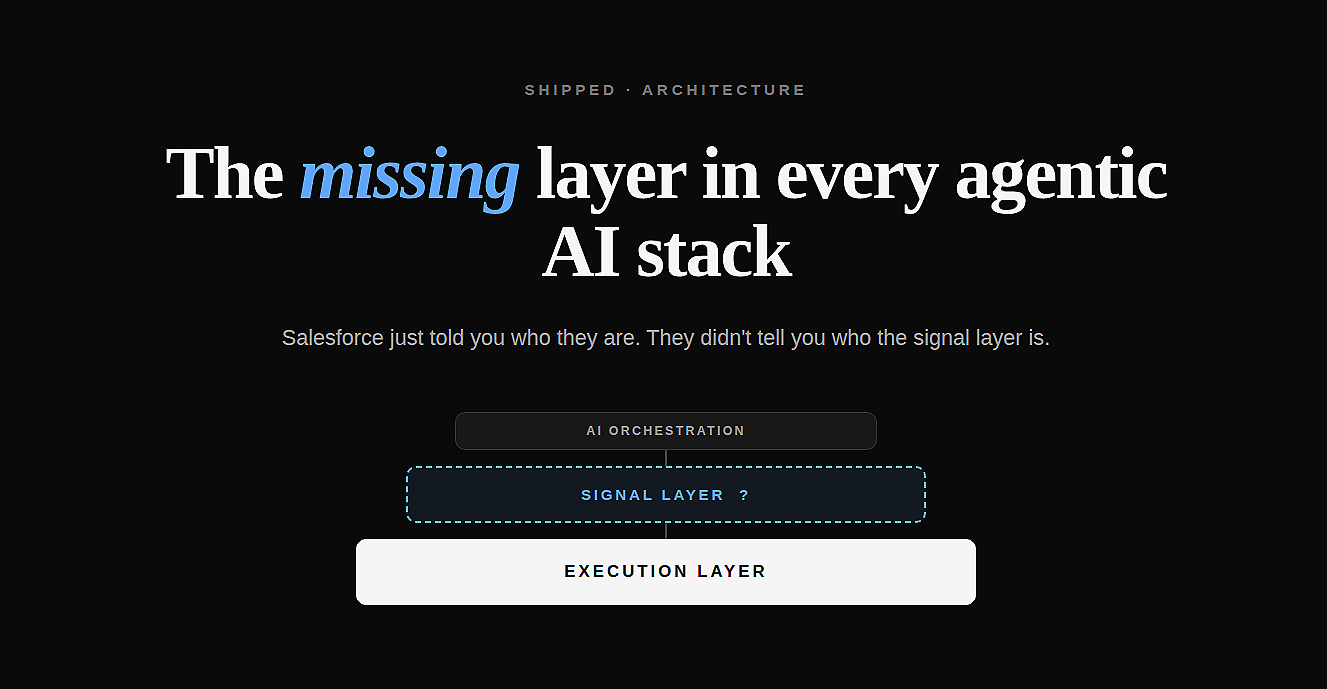

Buried in the architecture diagram is a phrase worth lingering on. Salesforce is, in their own words, the execution layer. That’s a specific architectural claim. It says: bring whatever AI workspace you like, explore in Claude, take action through us. We are where the governed work happens.

That phrase only makes sense if there is something upstream. If you are the execution layer, somebody is presumably the signal layer.

Conspicuously, nobody is.

This isn’t just CRM

The same gap shows up everywhere people are shipping agentic AI right now. Sales is the loudest example because the data is the most embarrassing — a stage field, a close-date field, and three lines of “good meeting, will follow up.” But the pattern repeats.

A clinical AI agent assumes the patient’s actual story — symptoms mentioned in passing, family history, the moment the patient hesitated — is sitting in the EHR. It isn’t. It’s in the doctor’s head and maybe in two lines of free text.

A trading agent assumes the analyst’s view — the conviction level, the unstated comp, the political read on management — is captured somewhere. It isn’t. It’s in a Slack thread and a 1:1 conversation that ended last Thursday.

An engineering agent assumes the design intent — why this architecture, what was traded off, what was rejected — is in the codebase or the wiki. It isn’t. It’s in a Notion doc that got abandoned three quarters ago.

In every one of these domains, the execution layer is real, the AI orchestration layer is plausible, and the signal layer is missing. The signal layer is where what actually happened gets turned into structured, retrievable, citable input. Almost no organization has one. Almost no vendor is building one explicitly.

The articulate-garbage failure mode

What happens when teams ship agentic AI on top of thin signal? The agents don’t fail loudly. They fail confidently.

They produce paragraphs of plausible analysis based on the three fields and the two activity logs that actually exist. They cite “the data” as if there were data. They surface recommendations that match what someone hoped would be true rather than what’s happening. The output is fluent, structured, and wrong.

The diagnostic is uncomfortable but useful. If your AI deployment is producing more activity but not more outcomes, you don’t have a model problem — you have a signal problem. The agent is acting on noise because noise is what’s in the system. Better models do not fix this. The next generation of models won’t retroactively populate the inputs that were never captured. They’ll just hallucinate more eloquently.

This is why so many enterprise AI rollouts look impressive in the demo and disappointing six months later. The demo runs on hand-curated data. Production runs on the actual data, which is mostly absent.

What a signal layer actually requires

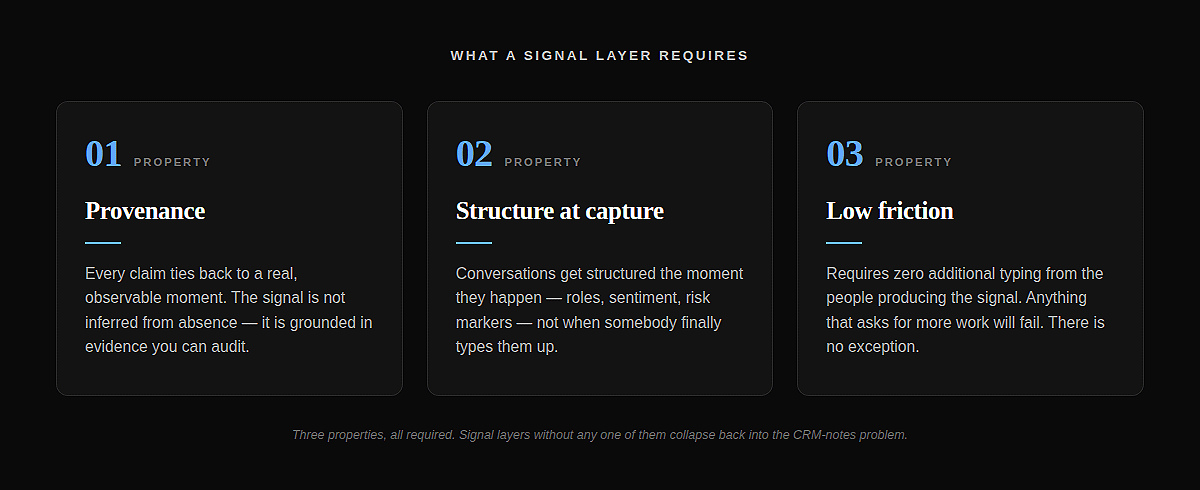

If you accept that the signal layer is the missing piece, three properties define one that works.

Provenance. Every claim the system makes ties back to a real, observable moment. The signal “this account has stakeholder coverage risk” can be traced to a specific conversation where two new names appeared and the existing champion went quiet. Not inferred from absence — grounded in evidence. Provenance is what turns AI output from a confident guess into something you can audit.
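To make the provenance property concrete, here is a minimal sketch of a record that cannot exist without evidence. The `Evidence` and `Signal` types, their field names, and the sample calls and dates are all hypothetical, invented for illustration; they are not taken from any product's schema.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Evidence:
    """A pointer to one observable moment (hypothetical schema)."""
    source_id: str         # e.g. a call or meeting identifier
    observed_at: datetime  # when the moment happened
    excerpt: str           # what was said or seen


@dataclass(frozen=True)
class Signal:
    """A claim together with the evidence it rests on."""
    claim: str
    evidence: tuple[Evidence, ...] = ()

    def __post_init__(self) -> None:
        # Refuse any claim that cannot be traced to an observed moment.
        if not self.evidence:
            raise ValueError(f"signal without provenance: {self.claim!r}")

    def citations(self) -> list[str]:
        """Audit trail as readable strings, oldest evidence first."""
        ordered = sorted(self.evidence, key=lambda e: e.observed_at)
        return [f"{e.source_id} @ {e.observed_at:%Y-%m-%d}: {e.excerpt}" for e in ordered]


# Illustrative data only: made-up calls and dates.
risk = Signal(
    claim="stakeholder coverage risk",
    evidence=(
        Evidence("call-002", datetime(2025, 3, 12), "champion did not attend"),
        Evidence("call-001", datetime(2025, 3, 5), "two new names joined the call"),
    ),
)
for line in risk.citations():
    print(line)
```

The design choice worth noting is that the check lives in the constructor: an ungrounded claim fails at creation time rather than surfacing later as a confident sentence in an agent's output.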

Structure at capture time. Conversations, meetings, documents, code reviews — these are unstructured by default. A signal layer structures them at the moment of capture, not at retrieval. Roles get extracted. Sentiment gets quantified. Risk markers get tagged. The work happens once, automatically, when the conversation occurs. Retrieval is then just lookup.
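A rough sketch of what "structure at capture, lookup at retrieval" could look like follows. Everything here is an assumption made for illustration: the `SignalStore` class, the `ROLE_HINTS` and `RISK_PATTERNS` tables, and the keyword rules standing in for whatever extraction a real system would use. Sentiment scoring is left out to keep the example small.

```python
import re
from dataclasses import dataclass

# Hypothetical rule tables; a real system would likely use a model instead.
ROLE_HINTS = {"cfo": "economic buyer", "engineer": "technical evaluator"}
RISK_PATTERNS = {
    "budget": re.compile(r"\bbudget\b", re.IGNORECASE),
    "timeline": re.compile(r"\b(delay|postpone|next quarter)\b", re.IGNORECASE),
}


@dataclass
class CapturedTurn:
    speaker: str
    role: str                # extracted once, at capture
    risk_markers: list[str]  # tagged once, at capture
    text: str


class SignalStore:
    def __init__(self) -> None:
        # Index built at capture time so retrieval is a plain lookup.
        self._by_marker: dict[str, list[CapturedTurn]] = {}

    def capture(self, speaker: str, title: str, text: str) -> CapturedTurn:
        """Structure one conversational turn at the moment it is recorded."""
        role = ROLE_HINTS.get(title.lower(), "unknown")
        markers = [name for name, pat in RISK_PATTERNS.items() if pat.search(text)]
        turn = CapturedTurn(speaker, role, markers, text)
        for marker in markers:
            self._by_marker.setdefault(marker, []).append(turn)
        return turn

    def with_marker(self, marker: str) -> list[CapturedTurn]:
        """Retrieval does no parsing; it only reads the index."""
        return list(self._by_marker.get(marker, []))


store = SignalStore()
store.capture("Dana", "CFO", "We may need to postpone; budget is frozen.")
store.capture("Lee", "Engineer", "The integration looks straightforward.")

for turn in store.with_marker("budget"):
    print(turn.speaker, turn.role, turn.risk_markers)
```

All the extraction cost is paid inside `capture`, so an agent asking for budget risk later never re-reads raw transcripts.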

Low friction. This is the kicker, and it’s where almost every “let your team add context” feature has failed for the last decade. People don’t log things because logging is work and the reward is invisible. Signal layers that solve the friction problem — voice capture, ambient extraction, no-typing-required structuring — get to be the layer that matters. Signal layers that ask for more typing fail. There is no exception to this.

The interesting question

Salesforce’s positioning is honest in a way that’s easy to miss in the marketing. They’re telling you what they are and what they aren’t. They are the system of record and the execution layer. They are not the signal layer. They probably can’t be — their data model was designed for forecast meetings, not for agents.

The question for everyone building agentic AI right now is who builds that missing layer. My read is that it’s not a feature of the CRM, and it’s not a feature of the model. It’s its own layer, and it lives close to where the conversations and observations actually happen — not where the forecast lives.

Whoever builds it well becomes the precondition for every interesting agentic product in their domain. That’s a position worth thinking about.

The author is building Auron — an AI-powered voice and conversation intelligence platform that captures and enriches organizational knowledge from meetings, calls, and conversations. Auron turns every interaction into structured signal that teams can act on.