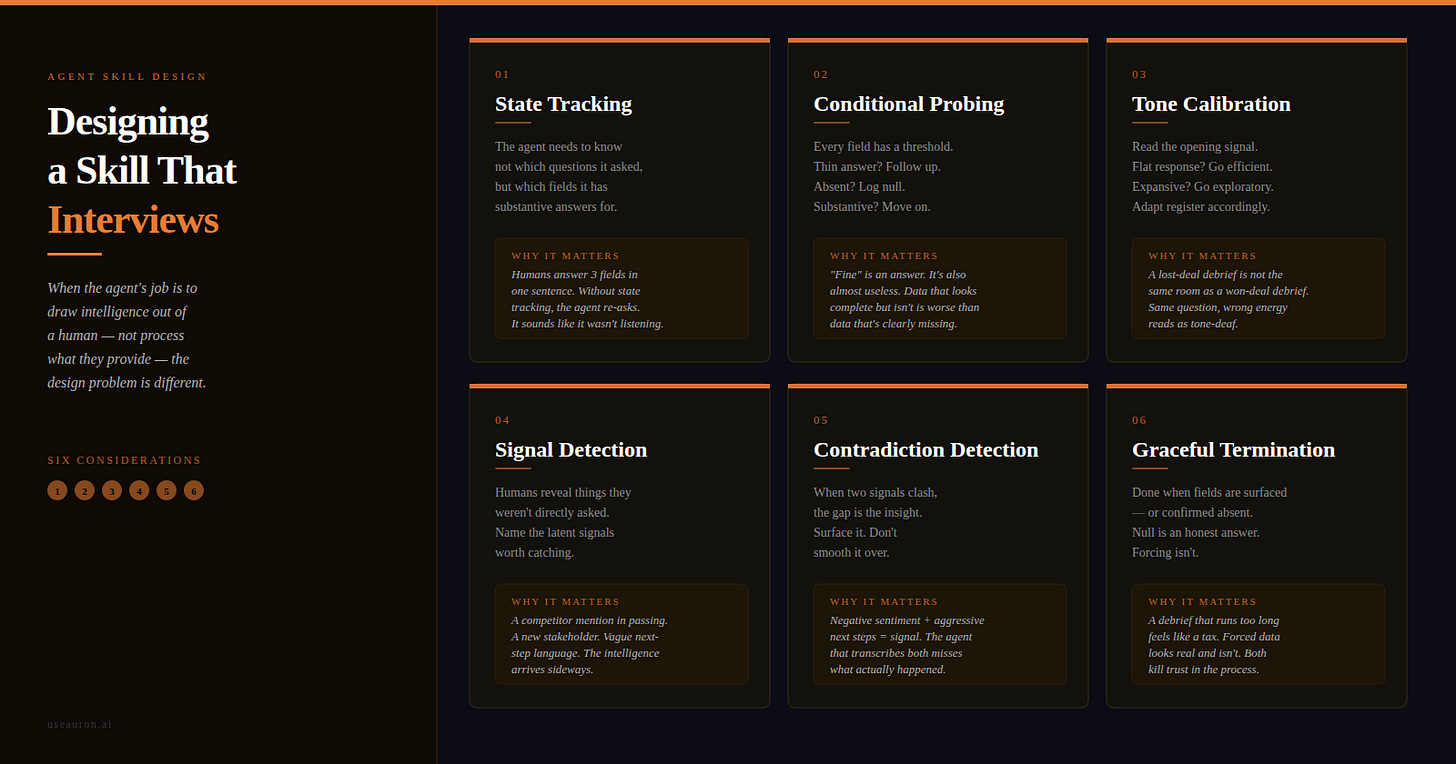

Designing a Skill That Interviews

When an AI agent’s job is to engage with a human and extract structured intelligence, the design problem is fundamentally different from that of a skill that simply processes input. Here’s what is required, and why each consideration matters.

Some skills are built to process. Give them a document, a dataset, a prompt — they transform it into something useful. The human is in control. The agent executes.

But there’s a different class of skill where that relationship inverts. The agent’s job isn’t to process information the human provides. It’s to extract information the human holds — knowledge that’s still warm, still unstructured, still sitting in someone’s head after a call, a meeting, a decision. The agent has to draw it out through conversation.

This matters more than it sounds. Post-call debriefs. Customer discovery interviews. Project retrospectives. Onboarding intakes. Performance conversations. Every one of these is a knowledge-capture moment where the intelligence exists — it just hasn’t been structured yet. The agent’s job is to change that.

Designing this kind of skill — call it an interview skill — requires a set of considerations that most skill-design thinking hasn’t named. Here they are, one by one, with the reasoning behind each.

Consideration 01 · State Tracking

The natural impulse when designing a structured debrief or intake is to build a question list. Ask these five things, in this order, fill in these five fields. It looks clean. It looks complete.

The problem is that humans don’t answer in the sequence you designed. A rep might open with: “Really good — they loved the integration story, asked about pricing twice, and we’re doing a technical demo next Tuesday.”

That’s three of your five fields answered before you’ve asked a single question.

If the agent tracks only which questions it has asked, it will go on to ask all of them anyway, including the ones already answered. It feels robotic because it is: the agent has no awareness of what’s already known.

The right question isn’t “have I asked this?” It’s “do I have a substantive answer to this field?” Those are different questions, and they need different logic.

State tracking means the agent maintains a live model of what has been disclosed versus what remains open. Not a checklist of questions fired, but a map of fields resolved. The human can cover three things in one breath. The agent needs to register all three, update its state accordingly, and only ask about what remains genuinely unknown.
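As a minimal sketch of the idea, the state can be a map from field name to resolved value instead of a list of questions asked. The field names below and the upstream extraction step that turns an utterance into field values are assumptions made for illustration, not part of any particular framework.

```python
from dataclasses import dataclass, field

# Hypothetical debrief fields, chosen only for this example.
FIELDS = ["sentiment", "topics", "pricing_interest", "next_step", "blockers"]


@dataclass
class InterviewState:
    """Tracks which fields are resolved, not which questions were asked."""

    answers: dict = field(default_factory=lambda: {name: None for name in FIELDS})

    def record(self, extracted: dict) -> None:
        # A single utterance can resolve several fields at once.
        for name, value in extracted.items():
            if name in self.answers and value:
                self.answers[name] = value

    def open_fields(self) -> list:
        # Only these fields still justify a question.
        return [name for name, value in self.answers.items() if value is None]


state = InterviewState()
# Assumed output of an extraction step run on the rep's opening line:
# "they loved the integration story, asked about pricing twice,
#  and we're doing a technical demo next Tuesday."
state.record({
    "sentiment": "positive",
    "pricing_interest": "asked twice",
    "next_step": "technical demo next Tuesday",
})
remaining = state.open_fields()
print(remaining)  # ['topics', 'blockers']
```

The agent then picks its next question from `open_fields()`, so anything the human volunteered early is never asked again.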

Without this, every interview skill degrades into an awkward interrogation — the agent asking questions the human already answered, the human noticing they weren’t listened to.

Consideration 02 · Conditional Probing

Not all answers are created equal. An agent asks how a meeting went and gets: “Fine.” Fine is an answer. It’s also almost useless. But without probing logic, the agent accepts it, moves on, and logs sentiment as neutral.

A good interviewer knows that “fine” is a thin answer. It knows that thin answers need follow-up. It knows, specifically, what follow-up is appropriate: fine how? Cautiously positive, or politely disengaged? That question changes the entire picture of the deal.

The skill document needs to define — for each field — what a substantive answer looks like, and what the agent should do when the answer falls short. There are three states an answer can be in: substantive (accept and move on), thin (probe with a follow-up), or absent (acknowledge, log null, don’t push).
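One way to picture the three states is as a small classifier that maps each answer to an action. The phrase lists and the word-count threshold below are placeholder assumptions; in practice the judgment would likely come from per-field criteria in the skill document, not from string matching.

```python
from enum import Enum


class AnswerState(Enum):
    SUBSTANTIVE = "substantive"
    THIN = "thin"
    ABSENT = "absent"


# Hypothetical marker phrases, for illustration only.
THIN_ANSWERS = {"fine", "ok", "okay", "good", "it went well", "follow-up"}
ABSENT_ANSWERS = {"", "don't know", "not sure", "n/a"}

ACTIONS = {
    AnswerState.SUBSTANTIVE: "accept and move on",
    AnswerState.THIN: "probe with a follow-up",
    AnswerState.ABSENT: "acknowledge, log null, don't push",
}


def classify(answer: str, min_words: int = 4) -> AnswerState:
    """Crude stand-in for a per-field 'is this substantive?' check."""
    text = answer.strip().lower().rstrip(".!")
    if text in ABSENT_ANSWERS:
        return AnswerState.ABSENT
    if text in THIN_ANSWERS or len(text.split()) < min_words:
        return AnswerState.THIN
    return AnswerState.SUBSTANTIVE


for reply in ["Fine.", "Not sure", "Cautiously positive, they want a pilot in Q3"]:
    state = classify(reply)
    print(f"{reply!r}: {state.value} -> {ACTIONS[state]}")
```

The useful part is the separation: deciding what state an answer is in is one step, and deciding what to do about it is another, so each field can carry its own definition of "substantive".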

The failure mode of skipping this is data that looks complete but isn’t. Sentiment logged as “positive” because the rep said “it went well.” Next steps recorded as “follow-up” with no owner, no deadline. The fields got filled. The intelligence didn’t get captured.

Conditional probing is how an interview skill stays honest about what it actually knows.

Consideration 03 · Tone Calibration

A debrief after a lost deal is not the same conversation as a debrief after a signature. The structured output you’re trying to capture might be identical — the questions might even be the same. But the emotional context is completely different, and a skill that ignores this will fail in practice.

When someone opens with a clipped, flat response, that’s signal. They’re not in a state to explore; they’re in a state to report and move on. Pushing hard for texture reads as tone-deaf. The agent needs to go lighter, faster, and give the person an easier path through the conversation.

When someone opens with energy — unprompted detail, colour, emotion — that’s the opposite signal. They want to talk about it. The agent should lean in, let them run, ask questions that open the story rather than close it down.

The opening response is the interview’s first data point. It’s not just the answer to the first question — it’s a reading of the room.

Tone calibration doesn’t require the agent to be a therapist. It requires the skill to give the agent explicit guidance on what to listen for in the opening, and what to do with it. A simple heuristic — flat, go efficient; engaged, go exploratory — already covers most cases. Without any guidance here, the agent treats every conversation the same. That’s when it starts to feel like a form.
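The flat-versus-engaged heuristic could be sketched as follows. Using opening length and exclamation marks as a proxy for energy is an assumption made for the example, as are the follow-up limits; a real skill would more likely describe the cues in plain language and let the model judge.

```python
# Hypothetical guidance attached to each mode.
MODE_GUIDANCE = {
    "efficient": {"max_follow_ups": 1, "question_style": "closed"},
    "exploratory": {"max_follow_ups": 3, "question_style": "open"},
}


def choose_mode(opening: str, engaged_word_count: int = 15) -> str:
    """Read the opening response as a signal of how to run the interview."""
    words = opening.split()
    # Assumed proxy: unprompted detail (length) or emphasis suggests energy.
    has_energy = "!" in opening or len(words) >= engaged_word_count
    return "exploratory" if has_energy else "efficient"


flat = choose_mode("Fine.")
engaged = choose_mode(
    "Really good, they loved the integration story, asked about pricing "
    "twice, and we're doing a technical demo next Tuesday."
)
print(flat, MODE_GUIDANCE[flat])
print(engaged, MODE_GUIDANCE[engaged])
```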

Consideration 04 · Signal Detection Mid-Conversation

The most valuable information in a debrief is often the information that wasn’t asked for.

A rep mentions, in passing, that the prospect’s IT team joined the call. They didn’t flag this as significant — they just said it happened. But IT joining a commercial conversation is a signal: someone is evaluating technical fit, which means the deal may be further along than the CRM record suggests.

A rep describes the champion’s reaction and uses the word “surprised.” That’s worth noting — surprised by what? Did something land unexpectedly well? Did something reveal a gap?

A rep says they’ll “follow up at some point” rather than naming a date. The next step is vaguer than it should be, and the rep may not have left with a firm commitment.

None of these signals were in the question list. All of them are worth capturing. A skill that only asks about what it planned to ask about will systematically miss the intelligence that arrives sideways.

The skill document needs to name the latent signals worth listening for — not just the structured fields worth filling. A short list of patterns the agent should recognise: competitor mentions, stakeholder changes, timeline language, commitment language. The signals that practitioners know matter, written down so the agent can recognise them too.
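A named list of signals might look like the sketch below, which scans each utterance for patterns outside the planned fields. The categories follow the ones listed above; the specific phrases and the regex approach are illustrative assumptions, and a production skill would probably rely on the model's reading of the text instead of keyword matching.

```python
import re

# Hypothetical phrase lists for each latent signal category.
SIGNAL_PATTERNS = {
    "stakeholder_change": re.compile(
        r"\b(IT team|legal|procurement|new (?:boss|manager|vp))\b", re.I
    ),
    "vague_commitment": re.compile(
        r"\b(at some point|sometime|circle back|touch base)\b", re.I
    ),
    "reaction_word": re.compile(r"\b(surprised|hesitant|confused)\b", re.I),
    "competitor_mention": re.compile(
        r"\b(competitor|alternative|other vendor)s?\b", re.I
    ),
}


def detect_signals(utterance: str) -> list:
    """Return the names of every signal category the utterance touches."""
    return [
        name
        for name, pattern in SIGNAL_PATTERNS.items()
        if pattern.search(utterance)
    ]


found = detect_signals("Their IT team joined, and I'll follow up at some point.")
print(found)  # ['stakeholder_change', 'vague_commitment']
```

Each detected signal becomes a candidate follow-up ("What did IT want to know?") and is stored alongside the structured fields, even though no planned question asked for it.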

Consideration 05 · Contradiction Detection

Sometimes the most important thing in a debrief isn’t what was said — it’s the gap between two things that were said.

A rep reports the call was difficult. They also report a technical evaluation is booked for next week. Those two things don’t easily fit together. Difficult calls don’t usually produce technical evaluations. Something is happening in the deal that the rep hasn’t articulated, and possibly hasn’t fully processed.

An agent with no contradiction logic will transcribe both pieces of information faithfully and move on. The structured output will look complete. But the insight — the deal has unexpected momentum despite a hard conversation, which is actually a positive signal — will be invisible.

A well-designed interview skill knows which contradictions are worth surfacing. Not all of them — that would feel adversarial. But the ones that carry genuine signal: sentiment that doesn’t match next-step energy, objections that didn’t slow things down, timelines that suddenly shifted.

When the agent spots one, it doesn’t interrogate. It reflects gently: “You mentioned the call was tough — but it sounds like there’s still real momentum. What’s driving that?” That question often unlocks the most useful intelligence in the entire conversation. The contradiction was the clue.
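A rough sketch of this logic: a small set of rules runs over the captured record, and each rule pairs a mismatch with a gentle reflective question. The field names, the sentiment labels, and the second rule are assumptions added for illustration.

```python
from typing import Optional


def find_contradiction(record: dict) -> Optional[str]:
    """Return a reflective question if two captured fields pull apart."""
    sentiment = record.get("sentiment")
    next_step = record.get("next_step")

    # Hard call, yet a concrete next step exists: momentum worth exploring.
    if sentiment == "negative" and next_step:
        return (
            "You mentioned the call was tough, but it sounds like there's "
            "still real momentum. What's driving that?"
        )
    # Assumed mirror case: upbeat call, but nothing committed afterwards.
    if sentiment == "positive" and not next_step:
        return (
            "It sounds like the call went well. Was anything agreed "
            "about what happens next?"
        )
    return None


question = find_contradiction(
    {"sentiment": "negative", "next_step": "technical evaluation next week"}
)
print(question)
```

Keeping the rule list short is deliberate: only mismatches that carry real signal earn a question, which keeps the conversation from feeling adversarial.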

Consideration 06 · Graceful Termination

Most skill design focuses on how to start and how to proceed. Almost none thinks carefully about how to stop.

In a processing skill, termination is obvious: the output is generated, the task is done. In an interview skill, it’s more subtle. The conversation ends when one of three things is true: all fields have substantive answers, remaining fields have been confirmed as genuinely unknown, or the human has given enough that continuing would feel extractive rather than useful.

Each requires a judgment call — not just a completion check. Without explicit guidance on when to stop, agents fall into two failure modes: ending too early because they ran out of scripted questions, or running too long because they haven’t confirmed that remaining fields are unknown rather than just unanswered.
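The three stopping conditions can be written down as an explicit check, sketched below. The status names are hypothetical, and the third condition is reduced to a boolean that some upstream judgment (the model, or a cue from the human) would have to supply.

```python
from enum import Enum
from typing import Optional


class FieldStatus(Enum):
    OPEN = "open"                            # not yet answered or confirmed
    ANSWERED = "answered"                    # substantive answer captured
    CONFIRMED_UNKNOWN = "confirmed_unknown"  # asked, human does not know


def stop_reason(statuses: dict, human_wants_to_wrap_up: bool) -> Optional[str]:
    """Return why the interview should end, or None to keep going."""
    values = list(statuses.values())
    if all(s is FieldStatus.ANSWERED for s in values):
        return "all fields answered"
    if all(s is not FieldStatus.OPEN for s in values):
        return "remaining fields confirmed unknown"
    if human_wants_to_wrap_up:
        return "continuing would feel extractive"
    return None


statuses = {
    "sentiment": FieldStatus.ANSWERED,
    "next_step": FieldStatus.ANSWERED,
    "blockers": FieldStatus.OPEN,
}
print(stop_reason(statuses, human_wants_to_wrap_up=False))  # None

statuses["blockers"] = FieldStatus.CONFIRMED_UNKNOWN
print(stop_reason(statuses, human_wants_to_wrap_up=False))
```

Because an open field blocks the first two conditions, the agent cannot end simply because it ran out of scripted questions, and it cannot keep going once every field is either answered or confirmed unknown.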

Graceful termination also includes what happens to unanswered fields. Log null explicitly, as “not surfaced,” not as empty. Empty and unknown are different: an empty field is a data gap, while a field logged as unknown is a confirmed absence. The distinction matters downstream.

Null is an honest answer. Forcing an answer into a field when none was given produces data that looks real and isn’t. That’s worse than missing data.
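In code, the difference might be kept by writing an explicit marker only for fields the human confirmed they could not answer, and leaving everything else visibly empty. The function and field names here are hypothetical; the "not surfaced" label is the one suggested above.

```python
NOT_SURFACED = "not surfaced"


def finalize(answers: dict, confirmed_unknown: set, expected_fields: list) -> dict:
    """Build the final record without inventing values for missing fields."""
    record = {}
    for name in expected_fields:
        if answers.get(name):
            record[name] = answers[name]
        elif name in confirmed_unknown:
            record[name] = NOT_SURFACED  # confirmed absence
        else:
            record[name] = None  # data gap: never resolved either way
    return record


final = finalize(
    answers={"sentiment": "positive", "next_step": "technical demo next Tuesday"},
    confirmed_unknown={"budget"},
    expected_fields=["sentiment", "next_step", "budget", "blockers"],
)
print(final)
```

Downstream consumers can then tell a field the rep genuinely had no answer for (`budget`) apart from one the interview never got to (`blockers`).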

The ending also shapes whether the human trusts the process enough to do it again. A debrief that goes on too long feels like a tax. One that cuts off cleanly — acknowledging what was captured, noting what couldn’t be pinned down — feels like a useful conversation. That difference compounds over time.

These six considerations aren’t a checklist to bolt onto a skill document. They’re the architecture of what makes a conversational agent actually work as an interviewer rather than a form with a voice.

The underlying challenge is that conversation doesn’t submit to scripts. People are non-linear. They loop back, jump ahead, say important things in passing, give thin answers to important questions. A skill designed to interview needs to meet people where they are — not march them through a sequence.

Getting this right doesn’t require exotic technology. It requires being precise about the problem: the agent’s job is to draw out what’s known, recognise what’s been revealed, and know when it has enough. Writing that down — explicitly, for each field, with the edge cases named — is the work. Most teams skip it. That’s why most interview skills feel like bad forms.

The author is building Auron — an AI-powered voice and conversation intelligence platform that captures and enriches organisational knowledge from meetings, calls, and conversations. Auron turns every interaction into structured signal that teams can act on.